From Wikipedia, the free encyclopedia

This article is about large collections of data. For the band, see Big Data (band). For the practice of buying and selling of personal and consumer data, see Surveillance capitalism.

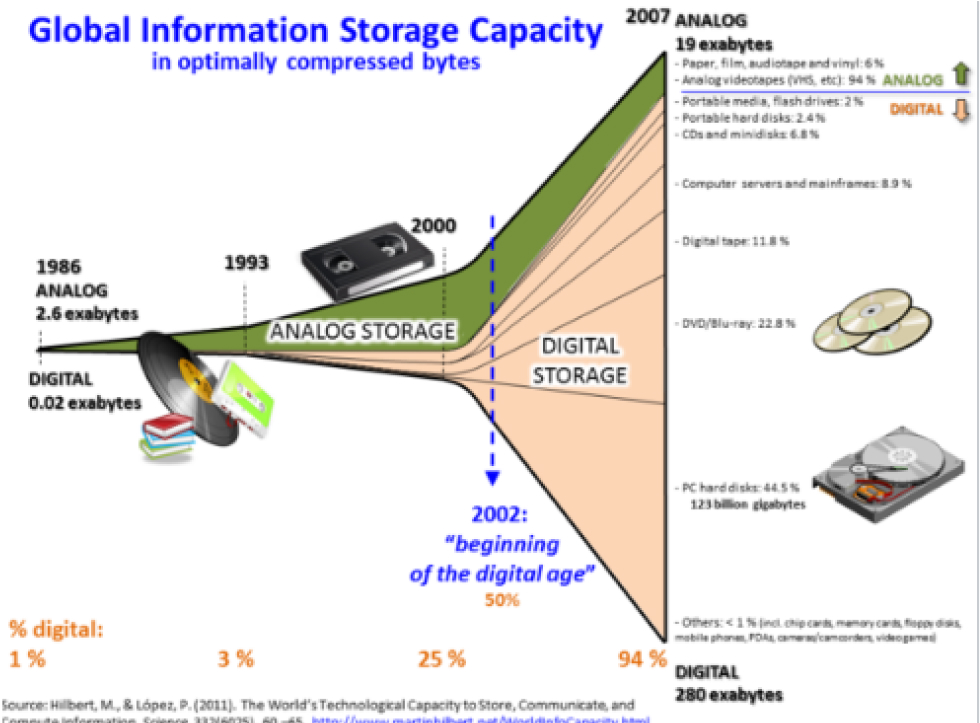

Non-linear growth of digital global information-storage capacity and the waning of analog storage[1]

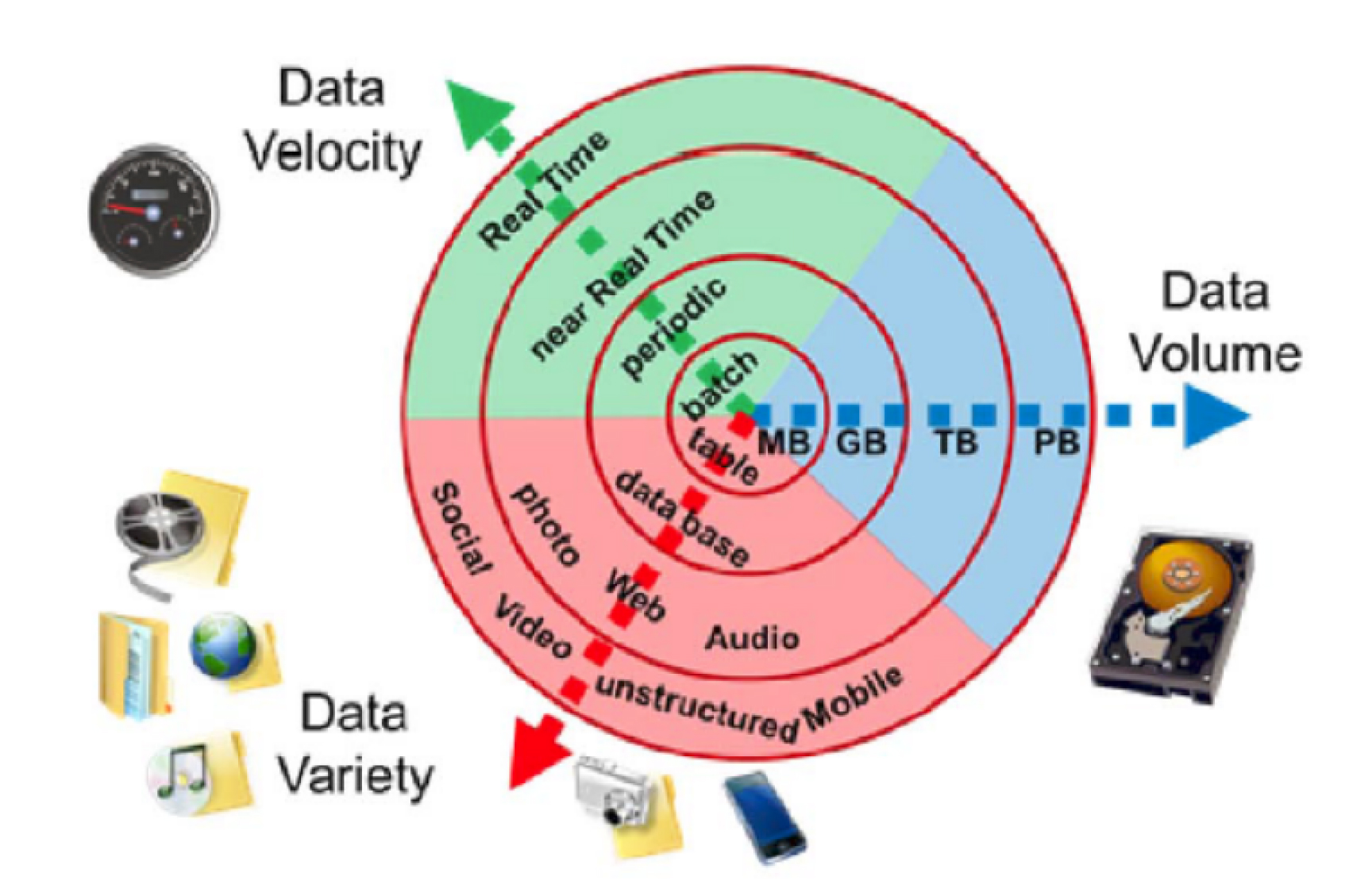

Big data refers to data sets that are too large or complex to be dealt with by traditional data-processing application software. Data with many entries (rows) offer greater statistical power, while data with higher complexity (more attributes or columns) may lead to a higher false discovery rate.[2] Big data analysis challenges include capturing data, data storage, data analysis, search, sharing, transfer, visualization, querying, updating, information privacy, and data source. Big data was originally associated with three key concepts: volume, variety, and velocity.[3] The analysis of big data presents challenges in sampling, where previously only observations and sampling were possible. A fourth concept, veracity, refers to the quality or insightfulness of the data. Without sufficient investment in expertise for big data veracity, the volume and variety of data can produce costs and risks that exceed an organization’s capacity to create and capture value from big data.
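The link between column count and false discoveries can be illustrated with a small simulation. The sketch below is only an illustration under stated assumptions: every column is pure Gaussian noise, unrelated to the outcome, and each column is tested against the outcome at roughly the 5% level using the Fisher z-approximation. The function names `pearson_r` and `count_false_discoveries` are hypothetical and do not come from the cited sources.

```python
import math
import random


def pearson_r(xs, ys):
    """Sample Pearson correlation coefficient of two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def count_false_discoveries(n_rows, n_cols, seed=0, z_crit=1.96):
    """Count noise columns that look 'significantly' correlated with the outcome.

    Assumption: outcome and columns are independent standard-normal noise,
    so every column flagged here is a false discovery by construction.
    """
    rng = random.Random(seed)
    outcome = [rng.gauss(0, 1) for _ in range(n_rows)]
    hits = 0
    for _ in range(n_cols):
        column = [rng.gauss(0, 1) for _ in range(n_rows)]
        r = pearson_r(column, outcome)
        # Fisher transformation: atanh(r) * sqrt(n - 3) is approximately N(0, 1)
        # when the true correlation is zero.
        z = math.atanh(r) * math.sqrt(n_rows - 3)
        if abs(z) > z_crit:
            hits += 1
    return hits


if __name__ == "__main__":
    for n_cols in (10, 100, 1000):
        print(n_cols, count_false_discoveries(n_rows=200, n_cols=n_cols))
```

With the row count held fixed, the number of spurious "significant" columns should grow roughly in proportion to the number of columns tested (about 5% of them), which is why wide data sets call for multiple-testing corrections.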

The size and number of available data sets have grown rapidly as data is collected by devices such as mobile devices, cheap and numerous information-sensing Internet of things devices, aerial (remote sensing) equipment, software logs, cameras, microphones, radio-frequency identification (RFID) readers and wireless sensor networks.[9][10] The world’s technological per-capita capacity to store information has roughly doubled every 40 months since the 1980s;[11] as of 2012, 2.5 exabytes (2.5×2^60 bytes) of data were generated every day.[12] An IDC report predicted that the global data volume would grow exponentially from 4.4 zettabytes to 44 zettabytes between 2013 and 2020. By 2025, IDC predicts there will be 163 zettabytes of data.[13] According to IDC, global spending on big data and business analytics (BDA) solutions is estimated to reach $215.7 billion in 2021.[14][15] Statista, by contrast, reports that the global big data market is forecast to grow to $103 billion by 2027.[16] In 2011, McKinsey & Company reported that if US healthcare were to use big data creatively and effectively to drive efficiency and quality, the sector could create more than $300 billion in value every year.[17] In the developed economies of Europe, government administrators could save more than €100 billion ($149 billion) in operational efficiency improvements alone by using big data.[17] Users of services enabled by personal-location data could capture $600 billion in consumer surplus.[17] One question for large enterprises is determining who should own big-data initiatives that affect the entire organization.[18]
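The growth figures above can be put on a common footing with a little arithmetic. The following sketch assumes smooth compound growth, which the cited reports do not necessarily claim, and converts between doubling times and annual growth factors. The helper names are hypothetical.

```python
import math


def annual_growth_factor(doubling_months):
    """Annual multiplier implied by a given doubling time in months."""
    return 2 ** (12 / doubling_months)


def implied_annual_growth(start, end, years):
    """Constant annual multiplier that turns `start` into `end` over `years`."""
    return (end / start) ** (1 / years)


def doubling_time_months(annual_factor):
    """Doubling time in months implied by a constant annual multiplier."""
    return 12 * math.log(2) / math.log(annual_factor)


if __name__ == "__main__":
    # Storage capacity doubling every 40 months.
    storage = annual_growth_factor(40)
    print(f"storage capacity: x{storage:.3f} per year")

    # IDC forecast: 4.4 ZB (2013) to 44 ZB (2020), i.e. tenfold in 7 years.
    volume = implied_annual_growth(4.4, 44, 7)
    print(f"data volume: x{volume:.3f} per year, "
          f"doubling every {doubling_time_months(volume):.1f} months")

    # Daily data generation as stated in the text: 2.5 x 2^60 bytes.
    print(f"bytes per day: {2.5 * 2**60:.3e}")
```

Under these assumptions, doubling every 40 months corresponds to roughly 23% growth per year, while the tenfold increase forecast for 2013 to 2020 corresponds to roughly 39% per year.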

Relational database management systems and desktop statistical software packages used to visualize data often have difficulty processing and analyzing big data. The processing and analysis of big data may require “massively parallel software running on tens, hundreds, or even thousands of servers”.[19] What qualifies as “big data” varies depending on the capabilities of those analyzing it and their tools. Furthermore, expanding capabilities make big data a moving target. “For some organizations, facing hundreds of gigabytes of data for the first time may trigger a need to reconsider data management options. For others, it may take tens or hundreds of terabytes before data size becomes a significant consideration.”[20]